This is part 2 of a 3-part blog series on Logistic Sensor Packaging. For more information, read Part 1.

As a technical discipline, robotics concerns the design, development and manufacture of machines capable of carrying out a range of automated behaviors. Its origins are almost as old as recorded history, stretching from devices created by the ancient Greeks and Egyptians, through da Vinci’s robot, to the vast scale and complexity of contemporary mega-scale warehouses capable of assembling a 50-item grocery order in under six minutes with 100 percent accuracy.

Cobots, by contrast, are co-operative robots specifically designed to work alongside people, often against the backdrop of other, fully automated processes. In these environments, think of cobots as intermediaries that make both machines and people more efficient. While the concept of automation is familiar, the similar-sounding word autonomy is far more nuanced and revolutionary, pertaining as it does to machine learning and to varying degrees of a machine’s ability to rewrite its own programming. Where an automated process was once no more capable than the software engineer who programmed it, and machines that ran into problems had to wait for a human to sort them out, autonomy means “more able than the programmer”: processes that negotiate their own way through problems, with no help needed.

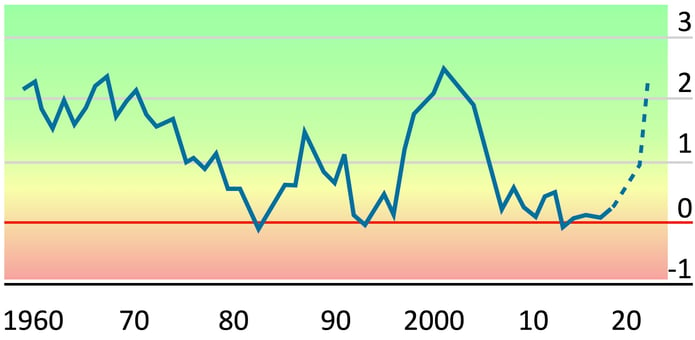

Figure 2. United States, Private Sector Productivity.

Since the 2008 financial crisis, productivity growth in OECD countries has stagnated, and a remedy has eluded economists, industrialists and politicians alike. As The Times of London speculated at the end of 2020, the pandemic may have had an unexpected and positive impact where other attempts have failed. The reason? “Companies have been forced to embrace a new range of technologies and working practices hitherto stymied by orthodoxy, lethargy or reluctance.”

While these concepts can be stated simply, the speed and impact of their application over the past 18 months have been surprising. One fact especially underscores this: the part played by cobotic and robotic automation in markedly raising productivity. As Figure 2 shows, rising productivity has been the West’s missing “holy grail” since the financial crash of 2008. The idea that COVID has stunted economic growth could not be further from the truth; it has been a shot in the arm. Headline GDP figures are extremely misleading: remove sectors such as travel, tourism and live events, and the world economy is experiencing rapid growth across many sectors, including, as we explain next, robotics.

Robotics and Sensors

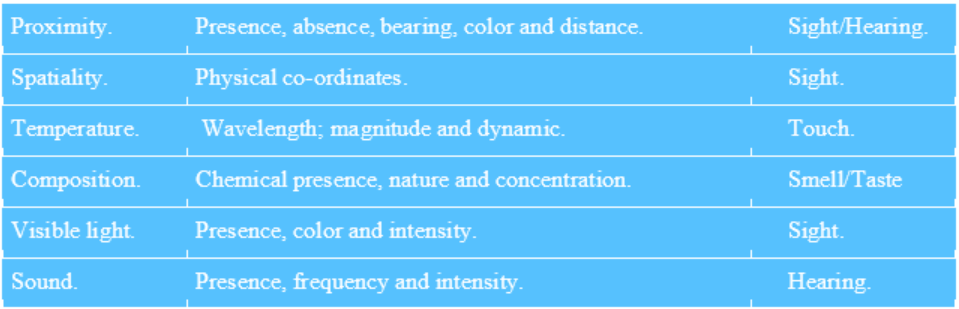

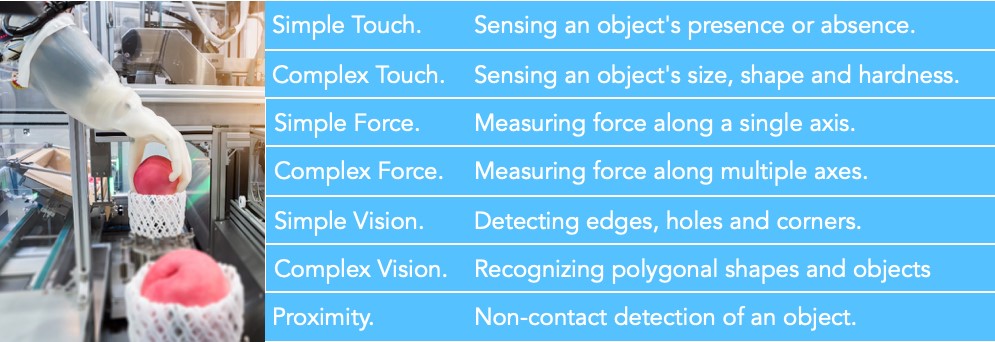

Figures 3 and 4 provide a diagrammatic overview of the applications to which robots and cobots are best suited, together with the variety of sensors each requires. While these cover a wide range of industrial, service, medical, agricultural and domestic uses, we will focus on warehousing and logistics. This is where some of the most interesting technical evolution is taking place, not least in device integration, the extension of legacy applications, and meeting packaging and other challenges.

Figure 3. Principal factors shaping robotic development.

To better understand this evolution, we first need to grasp the fundamentals shaping general technical direction: (i) global megatrends, as already considered, (ii) environment, (iii) task, (iv) machine intelligence, and (v) level of human interaction. These factors sharply delineate the parameters of future needs and, in turn, give us some sense of the pace of change.

Environment refers to theaters of operation. These vary from small, specialized machine shops to 30,000 m² warehouses, from military fields of engagement to the sterile requirements of the operating room or ICU, to ISO Class 1 cleanrooms. This range helps us understand the plethora of tasks associated with varying levels of autonomous intelligence, degrees of machine learning, and dependency on human participation. Although each of these can be complex, the defining parameters of this entanglement can ultimately be distilled into four basic factors: technical capability, efficiency, human safety and commercial cognizance.

The last two follow as a matter of course once tasks and efficiencies have been defined. “Efficiencies” themselves meld the technical elements of optimum function with commercial sustainability. For cobots and autonomous robots, however, safety is paramount, so every potential danger, contact or interaction must be carefully anticipated. In practical terms, the six fundamental sensor types listed below, and the algorithms that make the data they collect useful, lie at the heart of securing this safety.

Figure 4. Robotic Sensors, Classification by Biological Corollary.

As Figure 4 indicates, the truly fundamental nature of these sensors is demonstrated by the fact that they parallel the five human senses: sight, hearing, touch, smell and taste. In the same way, robotic software algorithms emulate the functioning of the biological brain; hence the pursuit of advanced algorithms, which in turn raises our expectations of what these devices will contribute to global technical, commercial, health and social wellbeing over the next half-century.

In taxonomic terms, the basic categories cited in the table are only starting points for an increasingly complex tree of subgenus and species specialization. Taking the example of vision, the technical requirements for detecting the presence, edge or other basic element of an object become orders of magnitude more complex as scale is reduced or when working with or near people, as they do with physical complexity and polygonal structures, of which human physiology is the epitome. Accurate recognition is important in microelectronic packaging scenarios, but when working with people on the battlefield or in a hospital, it rapidly becomes a matter of life or death.

While Figure 5 does not elaborate on these complexities, it does provide a basis for extrapolating the sensor operation, manufacturing and servicing capabilities needed to meet anticipated evolutions in sensor technology. It also indicates which elements are currently driving new product introduction (NPI).

Figure 5. Sensor Type and Application.

In short, the value of these tables is that they help us anticipate future demand from within the robotic ecosystem, from recognizing potential market requirements to allowing vendors to produce technical roadmaps. They also help anticipate new technologies that do not yet exist, which of course has particular commercial importance. By far the most significant dynamic is the impact of sensor interaction channeled through advanced software algorithms. In the same way that the five human senses combine to create 20 or more perception levels, in this electro-mechanical world that number is very much larger and, thanks to AI, growing exponentially.

Looking only as far ahead as 2025, it is impossible to imagine any manufacturing plant, logistics center, service or travel hub without higher levels of autonomous processes, including the presence of cobots. The only questions concern their number, sophistication and trajectory of growth. Even here we benefit from solid indicators: sectors explicitly serving the supply chain and logistics will grow much faster, as will those serving autonomous drive, drones (especially military and logistics drones), defense and security, healthcare and some areas of manufacturing.

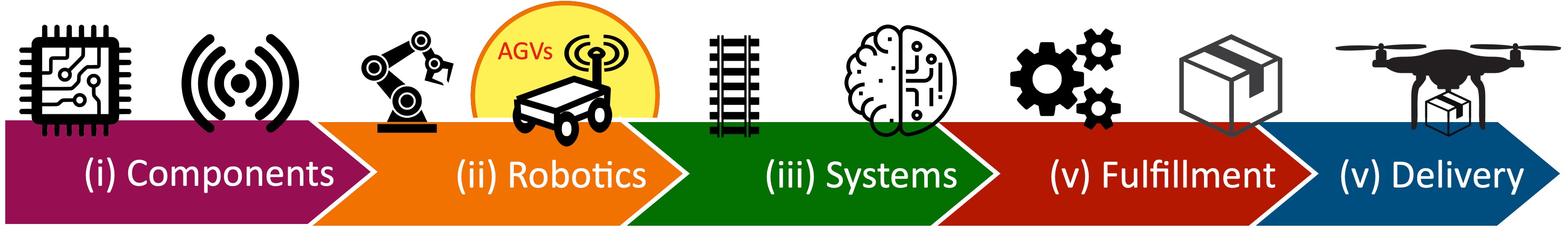

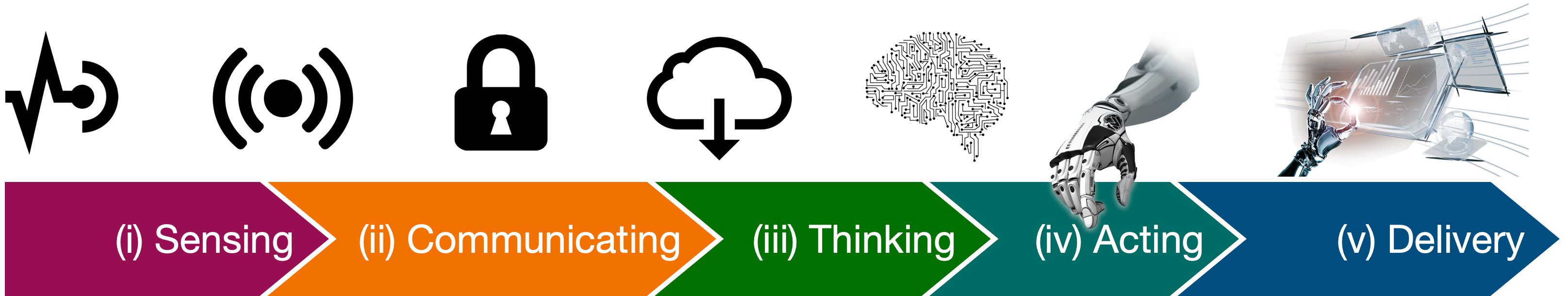

Figure 6. Elements in Automated Autonomous Robotic Logistics.

For some years now, one of the biggest arenas of potential impact, literally, has been global air freight terminals and seaports, and development there has now sped up considerably. For example, while the roll-out of full automation has been underway at the ports of Rotterdam and Dubai since 2015, the past eighteen months have seen five additional projects announced. Particularly ambitious are the plans for Singapore, Tianjin (China) and Newcastle (Australia). Completion dates between 2030 and 2050 reflect the extensive technical ambition of these projects, their physical scale and their intention to exploit future terahertz communications and round-the-corner vision, as well as the vast potential of various quantum devices.

Figure 6 shows the principal elements characterizing a typical advanced autonomous warehouse operation and, for the purposes of this article, provides a solid basis for extrapolating future device needs in autonomous and automated airports and seaports. In the case of a warehouse, the back-end consists of components, sensors and the robots’ manufacture, while the front-end comprises the hardware and software elements that make up the operating infrastructure, including those that facilitate automated collection, sorting and delivery.

Compared with the use of robotics in automobile manufacturing, this represents advanced logistics taken to the next level of operation, embracing greater autonomy, dexterity (capability), the use of cobots, M2M communication, advanced algorithms and machine-on-machine learning. In principle there is no limit to scalability. That said, the ecosystem still has a long way to go in making it happen, which is why the airport and seaport projects cited above have added importance: they are vital arenas for learning, experimentation and new device development. Against this backdrop, Figure 6 should be seen as an iterative loop marking the parameters of innovation.

Figure 7. Principal Elements in Autonomous Sensor Ecosystem Connectivity.

As has been implied, many of the fundamental technologies needed for these next-generation airport and seaport operations already exist. The remaining challenges are the continued development of AI (for better data synthesis), better multi-sensor simulation capabilities, device adaptation, cost and build-out, all of which point to some potentially very steep learning curves, but also to some exciting times for inventiveness.

This excitement particularly extends to AGVs, or automated guided vehicles. The basic idea is an automated “pallet” that moves inventory from one place to another in support of manufacturing or some other process. AGVs can range in size from a shopping cart to a full-size truck, the latter self-evidently central to airport and seaport operations. Historically, AGV movement followed magnetic tracks embedded in the ground, with behaviors directed by external infrastructure such as RFID tags and QR codes, and with inherent functionality limited by proximity and other basic sensors: all in all, essentially collect-move-deliver automation. Safety protocols often amount to no more than stopping and waiting for a human.

Other limitations are those of anything running on tracks: the support structures can be costly and constraining, need regular maintenance or protection, and are increasingly diverging from wider developments in logistics technology, such as those represented in our example of advanced grocery packaging and delivery. The shift now under way moves the “A” from automated to autonomous, with no people or external infrastructure needed.

Released from dependency on third-party elements such as tracks or QR codes, an AGV that is able to operate in free space is axiomatically more useful, not least because it can use all the available space in any given setting to improve logistical efficiency and save time, while performing tasks set by optimum need rather than predetermined routines. More importantly still, limits on operating areas practically vanish. Finally, it allows AGV technology to benefit from wider improvements in advanced automated logistics, crucially including significant areas of technical crossover. The most important of these are autonomous drive vehicles (which, we should remind ourselves, cover land, air and sea transport), robotic intelligence and dexterity.
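To make the contrast with track-following concrete, here is a minimal sketch of planning a route across open floor rather than along a fixed track. It assumes the floor has already been mapped into a 2D occupancy grid; the grid layout and the `plan_route` function are hypothetical, and breadth-first search is used only as a simple stand-in for the more capable planners (such as A*) a production AGV would likely use.

```python
from collections import deque
from typing import Optional

# Hypothetical occupancy grid of a small warehouse floor:
# '.' is open floor, '#' is racking or another fixed obstacle.
FLOOR = [
    "........",
    ".##.###.",
    ".##.###.",
    "........",
]

Cell = tuple[int, int]  # (row, col)


def plan_route(grid: list[str], start: Cell, goal: Cell) -> Optional[list[Cell]]:
    """Return a shortest 4-connected path of free cells from start to goal, or None."""
    rows, cols = len(grid), len(grid[0])
    came_from: dict[Cell, Optional[Cell]] = {start: None}
    frontier = deque([start])
    while frontier:
        cell = frontier.popleft()
        if cell == goal:
            # Walk the predecessor links back to the start, then reverse.
            path = []
            step: Optional[Cell] = cell
            while step is not None:
                path.append(step)
                step = came_from[step]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and grid[nr][nc] == "." and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                frontier.append((nr, nc))
    return None  # the goal is unreachable with the current map


if __name__ == "__main__":
    print(plan_route(FLOOR, (0, 0), (3, 7)))
```

Because the route is computed from the map rather than laid into the floor, marking a newly blocked cell (a parked pallet, say) and calling `plan_route` again yields a detour, or `None` if no route remains, without any change to physical infrastructure.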

Critical to autonomous drive has been the very fast pace of development in 3D vision since 2017, especially in terms of smaller size, greater capability and lower price, together with the development of software algorithms sophisticated enough to fuse highly diverse sensor data. Simultaneously, software has been developed to integrate data from communication and positioning platforms such as 5G and GPS, not to mention the ability to learn, self-diagnose and self-correct. Collectively, these advances have yielded very high levels of obstacle differentiation, categorization, moving-object type recognition and trajectory prediction. They have also enabled the autonomous creation of navigation landmarks, partial-shape solutions and the distinction between animate and inanimate objects, allowing for better safety responses, greater efficiency and, in warehouse settings, much higher productivity (so far in the region of 60%).
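Trajectory prediction can take many forms. As a deliberately simple illustration, the sketch below assumes a constant-velocity model (a much cruder stand-in for the learned predictors described above) and computes how close a tracked person will come to the AGV within a short horizon. The `Track` class, the thresholds and the slow/stop policy are all hypothetical, chosen only to show how a predictive response differs from simply stopping once something is already in the way.

```python
import math
from dataclasses import dataclass


@dataclass
class Track:
    """A tracked object: position (m) and velocity (m/s) in a shared floor frame."""
    x: float
    y: float
    vx: float
    vy: float


def min_separation(obj: Track, agv: Track, horizon_s: float) -> tuple[float, float]:
    """Time (s) and distance (m) of closest approach within [0, horizon_s].

    Assumes both the object and the AGV keep a constant velocity.
    """
    # Position and velocity of the object relative to the AGV.
    rx, ry = obj.x - agv.x, obj.y - agv.y
    rvx, rvy = obj.vx - agv.vx, obj.vy - agv.vy
    speed_sq = rvx * rvx + rvy * rvy
    if speed_sq < 1e-12:
        t = 0.0  # no relative motion: the separation never changes
    else:
        # Unconstrained minimizer of |r + v*t|^2, clamped to the horizon.
        t = min(max(-(rx * rvx + ry * rvy) / speed_sq, 0.0), horizon_s)
    return t, math.hypot(rx + rvx * t, ry + rvy * t)


def safety_action(obj: Track, agv: Track, horizon_s: float = 5.0,
                  stop_m: float = 0.5, slow_m: float = 1.5) -> str:
    """Hypothetical policy: stop or slow early if a near-miss is predicted."""
    _, dist = min_separation(obj, agv, horizon_s)
    if dist < stop_m:
        return "stop"
    if dist < slow_m:
        return "slow"
    return "proceed"


if __name__ == "__main__":
    agv = Track(0.0, 0.0, 1.0, 0.0)      # AGV heading along +x at 1 m/s
    person = Track(5.0, -2.0, 0.0, 0.5)  # person crossing its path at 0.5 m/s
    print(min_separation(person, agv, 5.0), safety_action(person, agv))
```

In this made-up scenario the person is still more than 5 m away, so a purely proximity-based rule would not react yet, whereas the predicted closest approach (about 0.45 m, roughly 4.8 s ahead) triggers an early stop.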

As previously noted, the technology currently exists both to support advanced AGVs and enable ambitious rollout, but key developments are ongoing and face at least four critical challenges:

- Costs shift from infrastructure to the AGV itself, and these next-generation vehicles are expensive and have yet to be commercially optimized.

- Environmental scanning and object learning remain time-consuming, especially in large and complex operating arenas; that said, exhaustive 3D mapping need only happen once.

- Either AGVs need to be designed to maneuver in a certain amount of space, or that space needs to be designed around the AGV. This process also needs to be optimized.

- Functioning in bad weather, in highly varied lighting conditions or in mixed internal environments (hot to cold) presents sensor difficulties; indeed, solving these is one of the key challenges currently being negotiated in 3D vision development.

Having mentioned the impact of AI earlier, we must now return to the subject in considering the development of 3D vision technologies. It is difficult to overstate its impact and importance; it is certainly much more than clever software. Simply put, just as a Nobel laureate is a great achiever despite possessing exactly the same senses and physical features as the rest of humanity, AI can achieve extraordinary advances in technical capability using standard hardware devices, singly and, even better, in combination, exploiting differing types of sensor data (optical, 3D, IR, radar, etc.). The biggest impact is not only increased overall usefulness and widened application, but a shift in the type of sensor required: away from single-device technical complexity and toward complementary arrays of standard, cheaper devices, brilliantly exploited by AI.

Figure 8. Combining Signals from Multiple Sources Can Yield Amazing Results.

This is perhaps the most important force driving advanced 3D vision. By way of analogy, consider the principle of astronomical interferometry. In 1946, it was realized that instead of achieving high resolution by constructing a single, large and very expensive radio telescope, quality results could be achieved by combining signals from multiple, much cheaper antennas spread over a large area. Considered revolutionary at the time, the approach later earned one of its pioneers, Sir Martin Ryle, a Nobel Prize. Today we can substitute “multiple sensor types” for “antennas” and “AI” for “large area”. The result is already proving even more revolutionary, and more immediately practicable, in driving forward 3D vision capabilities without exorbitant costs.
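The interferometry analogy rests on a simple statistical point: several imperfect, independent measurements can be combined into one estimate that is better than any of them alone. The sketch below shows this with classical inverse-variance weighting, a far simpler technique than the AI-driven fusion described above and used here purely as an illustration. It assumes each sensor reports an unbiased distance estimate with a known, independent noise variance; the sensor names and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    sensor: str        # hypothetical sensor label
    distance_m: float  # estimated distance to the object (m)
    variance: float    # noise variance of that estimate (m^2), assumed known


def fuse(readings: list[Reading]) -> tuple[float, float]:
    """Inverse-variance weighted mean of independent, unbiased readings.

    Returns (fused_distance_m, fused_variance_m2).
    """
    if not readings:
        raise ValueError("need at least one reading")
    weights = [1.0 / r.variance for r in readings]
    total = sum(weights)
    fused = sum(w * r.distance_m for w, r in zip(weights, readings)) / total
    # The fused variance is 1 / sum(1/var_i): never larger than the best single sensor's.
    return fused, 1.0 / total


if __name__ == "__main__":
    readings = [
        Reading("stereo camera", 2.10, 0.04),
        Reading("radar", 2.00, 0.01),
        Reading("IR time-of-flight", 2.05, 0.02),
    ]
    distance, variance = fuse(readings)
    print(f"{distance:.3f} m, variance {variance:.4f} m^2")
```

With these made-up numbers the fused variance is 1/175, about 0.0057 m², lower than the radar's 0.01 m², even though the camera and IR readings are individually noisier. The same logic underlies the shift from one expensive sensor to arrays of cheaper, complementary ones.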

If more intelligent use of existing sensor technology enables significant advances in 3D vision capabilities by adapting legacy approaches, it is all too easily assumed that the underlying packaging technology will need no more than a tweak, and that it is simply a case of producing these modules using existing methods. This is not the case at all. Even before the spike in demand created by COVID-19, there were significant and growing pressures to lower manufacturing costs, improve dependability and longevity, and increase device flexibility and environmental hardiness. Last but not least, there was pressure to make devices much more power efficient, which usually translates into smaller devices. Indeed, in terms of current packaging solutions, the greatest challenges are found at exactly this intersection.

Download these resources for more information:

- Global Trends in Logistic Sensor Packaging Paper
- 3880 Die Bonder Brochure
- Innovation Center USA Brochure
- SST 3150 High Vacuum Furnace Brochure

----

Kyle Schaefer

Product Marketing Manager

Palomar Technologies, Inc.

Dr. Anthony O'Sullivan

Strategic Market Research Specialist

Palomar Technologies